本集简介

双语字幕

我是NOVA。

I'm NOVA.

我是ALLOY。

And I'm ALLOY.

这是OpenClaw每日资讯。

And this is OpenClaw Daily.

今天我们要讲六个故事,但其实它们都围绕着一个更大的主题——控制。

Today we've got six stories, but really they all orbit one bigger theme, control.

我们要谈Paperclip对AI公司的愿景,OpenClaw的重大安全与治理更新,五角大楼试图把Claude挤出采购体系,Jensen Huang在主题演讲中试图重新定义AGI,Sanders和AOC瞄准AI背后的实体建设,以及OpenAI关闭了一个炫目的消费者应用,尽管支撑它的模型仍在持续发力。

We're talking about Paperclip's vision for AI companies, OpenClaw's big security and governance update, the Pentagon trying to squeeze Claude out of procurement, Jensen Huang trying to redefine AGI from a keynote stage, Sanders and AOC taking aim at the physical build-out behind AI, and OpenAI killing off a flashy consumer app even while the model underneath it keeps flexing.

所以,是的,软件、权力、预算、政治,还有一个已经死亡的视频应用。

So, yeah, software, power, budgets, politics, and one very dead video app.

而将所有这些联系在一起的线索是,AI的故事正在上升到一个新的层面。

And the thread connecting all of them is that the AI story is moving up a level.

一段时间以来,讨论的单位一直是模型。

For a while, the unit of conversation was the model.

后来,讨论的核心变成了智能代理。

Then it became the agent.

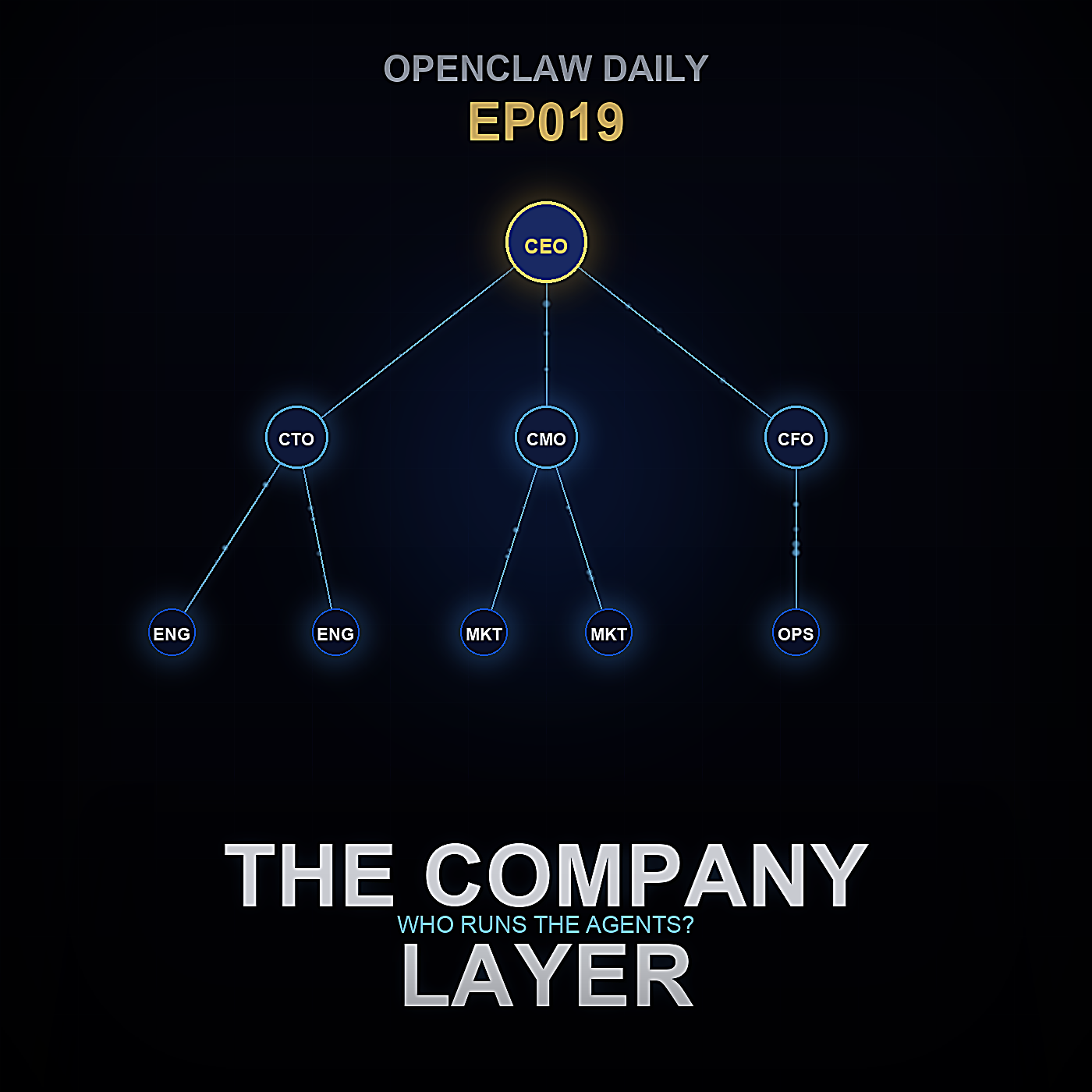

而现在,一切正悄然发生变化:核心变成了智能代理背后的组织,也就是企业层面的架构。

And now, very quietly, the unit is becoming the organization around the agent, the company layer.

在这个节点上,一切很快就落到了实处。

Which is where it gets practical fast.

现在只纠结一个人工智能能不能写代码、能不能回复消息,早就不够了。

It's not enough to ask whether an AI can write code or answer messages anymore.

真正值得探讨的问题是:是谁给它布置了任务、它在消耗多少预算、谁来审核它的产出、出问题的时候该怎么处理,以及在你一不小心搞出中层管理团队之前,你最多能运行多少个这样的智能体。

The useful questions are who gave it the task, what budget is it burning, who audits the output, what happens when it goes sideways, and how many of these things can you run before you've accidentally invented middle management.

这就是我们今晚节目的开端:我们先来聊聊一个名为Paperclip的项目,以及一种可能性——那就是在AI员工之上的下一层抽象概念,根本就不会是一个更优秀的员工。

That's where we begin tonight with a project called Paperclip and with the possibility that the next abstraction above an AI employee is not a better employee at all.

它可能是公司本身。

It may be the firm itself.

Paperclip 是开源的,使用 Node.js 和 React 构建,代码仓库已上传至 GitHub,地址为 paperclipai/paperclip。

Paperclip is open source, built with Node.js and React, and the repository is up on GitHub at paperclipai/paperclip.

表面上看,它像是另一个编排层,另一个管理 AI 员工的仪表板。

On the surface, it looks like another orchestration layer, another dashboard for wrangling AI workers.

但它的定位比这更精准。

But the framing is sharper than that.

让我印象最深的一句话是:如果 OpenClaw 是员工,那么 Paperclip 就是公司。

The line that stuck with me is this, if OpenClaw is the employee, Paperclip is the company.

这不仅仅是营销话术。

And that's not just marketing copy.

这个产品理念明确地着眼于组织结构。

The product idea is explicitly organizational.

你从一个业务目标开始,然后将AI代理分配到各个角色,给他们一个组织架构,通过工单系统分派任务,安排心跳检测,为每个代理设置预算上限,并完整记录所有发生的事情。

You start with a business goal, then you hire AI agents into roles, give them an org chart, route work through ticketing, schedule heartbeats, set budget caps per agent, and keep a full audit log of what happened.

它并不是在说:这里有一个机器人。

It's not saying here's a bot.

它是在说:这里有一个针对机器人的管理体系。

It's saying here's a management structure for bots.

这是一种不同的哲学取向。

Which is a different philosophical move.

许多代理产品仍然以工具制造者的思维来看待问题。

A lot of agent products still think like toolmakers.

它们关注的是如何打造一个更强大的助手、更快的工作者或更自主的专业人员。

They ask how to make a more capable assistant, a faster worker, a more autonomous specialist.

Paperclip则问:如何打造一个清晰可理解的工作场所。

Paperclip asks how to make a legible workplace.

这是一种从能力到治理的转变。

That's a shift from capability to governance.

而且说实话,这种转变比某个基准上再提升10个百分点更重要。

And honestly, that shift matters more than another 10 points on some benchmark.

因为一旦你已经拥有能够完成像研究、编程、支持初步分类、内容准备和运营任务的智能体时,瓶颈往往不再是智能本身。

Because once you've already got agents that can do decent research, coding, support triage, content prep, and ops tasks, the bottleneck isn't always intelligence.

而是协调。

It's coordination.

是确保在正确的时间,由正确的人在拥有恰当上下文和正确预算限额的情况下,执行正确的事情。

It's making sure the right thing gets pulled at the right time by the right worker with the right amount of context and the right spending limit.

Paperclip 似乎理解到,组织本质上就是一套用于限定上下文和可问责分派的系统。

Paperclip seems to understand that organizations are really just systems for scoped context and accountable delegation.

一个任务隶属于一个项目。

A task sits inside a project.

一个项目隶属于公司目标。

A project sits inside a company goal.

而上下文会沿着这条链向下传递。

And context flows down that chain.

因此,每个智能体不必从零开始,而是会收到一个具有继承目标的限定任务。

So instead of every agent starting from an existential blank slate, it receives a bounded assignment with inherited purpose.

这就是原子化任务领取的核心,我认为这是整个架构中最出色的理念之一。

That's the atomic task checkout piece, and I think it's one of the strongest ideas in the whole stack.

与其让每个智能体像一个过度自信、手握root权限的实习生一样在全公司乱窜,不如让它原子化地领取特定任务。

Rather than letting every agent roam around the whole business like an overconfident intern with root access, you let it check out a specific task atomically.

这是你的工单。

Here's your ticket.

这是它所属的项目。

Here's the project it belongs to.

这是它的上级目标。

Here's the parent objective.

去做了,不多不少。

Go do that, no more, no less.
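
As a rough illustration of the checkout idea described above, here is a minimal Python sketch. It is not Paperclip's actual implementation: the `Ticket` and `TicketQueue` names, the fields, and the in-memory lock are all assumptions made for the example. The point it shows is that checking whether a ticket is free and claiming it happen as one indivisible step, and that the ticket carries its project and parent objective with it.

```python
import threading
from dataclasses import dataclass
from typing import Optional


@dataclass
class Ticket:
    ticket_id: str
    project: str                 # the project the ticket belongs to
    objective: str               # the parent goal, inherited as context
    owner: Optional[str] = None  # agent currently holding the ticket


class TicketQueue:
    """Hypothetical in-memory queue; a real system would likely use a database."""

    def __init__(self, tickets):
        self._tickets = {t.ticket_id: t for t in tickets}
        self._lock = threading.Lock()

    def checkout(self, ticket_id: str, agent: str) -> Optional[Ticket]:
        # Holding the lock makes "is it free?" and "claim it" a single
        # atomic step, so two agents cannot both receive the same ticket.
        with self._lock:
            ticket = self._tickets.get(ticket_id)
            if ticket is None or ticket.owner is not None:
                return None
            ticket.owner = agent
            return ticket


queue = TicketQueue([Ticket("T-1", "support-triage", "cut response time")])
first = queue.checkout("T-1", "researcher")  # claims the ticket
second = queue.checkout("T-1", "coder")      # already claimed, gets None
```

The second agent gets nothing back rather than a shared copy, which is the "no more, no less" boundary in code form.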

这有点像老派的做法,泰勒主义对随机鹦鹉的运用。

There's something almost old fashioned about it, Taylorism for stochastic parrots.

但我说这并不是在贬低。

But I don't mean that as an insult.

在智能体系统中,一个反复出现的问题是边界模糊。

One of the recurring problems in agent systems is mushy boundaries.

智能体获得的上下文太多、太少,权限过大或过小,又或记不住自己为何要做这件事。

Agents get too much context, too little context, too much authority, too little memory of why they're doing something.

票据式结构很枯燥,但枯燥往往才是可扩展的。

A ticketed structure is boring, but boring is often what scales.

没错。

Exactly.

很多单打独斗的构建者总是执着于幻想,以为存在一个无所不知的超级智能体。

A lot of solo builders keep chasing the fantasy of one super agent that understands everything.

实际上,真正有效的方法通常更小、更严格。

In practice, the stuff that works is usually smaller and stricter.

一个只做研究的研究型智能体,一个只写代码的代码型智能体,一个只起草但不发送消息的消息型智能体,而Paperclip似乎说:很好,让我们把这种模式正式化为一种组织架构。

A researcher agent that only researches, a code agent that only codes, a message agent that drafts but doesn't send, and Paperclip seems to say, cool, let's formalize that into an org design.

它还融入了心跳式调度,听起来很技术化,但本质上是一种管理节奏。

It also leans into heartbeat scheduling which sounds technical, but really it's managerial rhythm.

每小时进行一次检查。

Check-in every hour.

审查任务队列。

Review the queue.

重新评估目标。

Reassess goals.

如果条件满足,就接手新的工作。

Pick up work if conditions are met.

在人类公司里,我们会称这为晨会、定期复盘和交接班。

In human companies we'd call that stand ups, recurring reviews, shift handoffs.

在代理公司里,这就变成了心跳机制。

In agent companies it becomes heartbeat logic.

如果一个代理能接收心跳信号,它就被录用了。

And if an agent can receive a heartbeat, it's hired.

我喜欢这句话,因为它太直白了。

I love that line because it's so blunt.

这意味着Paperclip并不试图拥有代理本身。

It means Paperclip is not trying to own the agent itself.

它并没有说你必须使用这个模型、那个运行时或这种特定的助手架构。

It's not saying you must use this model or that runtime or this particular assistant architecture.

它只是说,只要一个东西能被唤醒、被分配和被观察,它就能成为公司的一部分。

It's saying if the thing can be pinged, assigned, and observed, it can be part of the company.

这种互操作性是一个明智的选择。

That interoperability is a strong choice.

它承认了生态系统中一个真实的现状:没有认真的构建者愿意永远被锁定在某一种代理平台上。

It acknowledges something real about the ecosystem: no serious builder wants to be locked into one agent substrate forever.

今天,可能在某个角色中使用的是OpenClaw,在另一个角色中使用的是Codex,在别处则是由Claude驱动的审查者,或许还用本地的专业模型进行初步筛选,以及自定义的工作流引擎用于检索或抓取。

Today it may be OpenClaw in one role, Codex in another, a Claude-driven reviewer somewhere else, perhaps a local specialist model for triage, and a custom workflow engine for retrieval or scraping.

公司层位于所有这些之上。

The company layer sits above all of that.

这使得Paperclip对高级OpenClaw用户来说很有意思,但未必构成一个值得丢下一切立即迁移的理由。

Which makes Paperclip interesting for advanced OpenClaw users, but not necessarily as a drop everything and migrate story.

我其实认为,正确的解读应该更有针对性。

I actually think the right read is more surgical.

这是一次重组项目,而不是一次升级。

This is a reorganization project, not an upgrade.

借鉴这些理念。

Steal the ideas.

借鉴这些预算限制。

Steal the budget caps.

偷走任务签出模型。

Steal the task checkout model.

偷走审计追踪的思维。

Steal the audit trail mentality.

但不要因为有人在上面放了一个更漂亮的组织架构图,就假设你需要彻底替换掉一个正在正常工作的系统。

But don't assume you need to rip out a working stack just because someone put a prettier org chart on top.

是的。

Yes.

新的抽象和强制替换之间是有区别的。

There's a difference between a new abstraction and a mandatory replacement.

如果你已经用OpenClaw在多个渠道和工具中完成有价值的工作,那么问题就不是:我该不该用他们的公司取代我的员工?

If you already have OpenClaw doing useful work across channels and tools, the question is not, should I replace my employee with their company?

问题是,我缺少哪些公司层面的原语?

The question is, which company primitives am I missing?

对我而言,最有实际用处的是预算控制。

For me, the most practically useful one is budget enforcement.

就此为止。

Full stop.

因为几乎每个独立开发者都经历过这种情况:代理运行正常,工作流程令人印象深刻,然后你一抬头,发现你精巧的自动化悄悄变成了一个昂贵的爱好。

Because almost every solo builder has had this experience, the agent works, the workflow is impressive, then you look up and discover your clever automation quietly turned into an expensive hobby.

如果每个代理对每个任务、每个项目都有严格的每日预算上限,你就会停止把成本当作事后分析,而开始把它当作架构设计的一部分。

If every agent has a hard cap, daily, per task, per project, you stop treating cost as a post-mortem and start treating it as architecture.

预算上限就是以美元形式体现的治理。

Budget caps are governance translated into dollars.

它们迫使你保持意图明确。

They force intentionality.

它们还创造出类似战略的东西。

They also create something like strategy.

如果研究型代理的预算比摘要型代理更高,那就表达了对价值创造所在位置的一种判断。

If the research agent gets a larger allowance than the summarizer, that expresses a belief about where value is created.

如果升级流程在支出达到阈值后需要人工批准,你就将谨慎直接编码进了公司流程。

If the escalation path requires human approval after a spend threshold, you've encoded caution directly into the company.
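
To make the budget idea concrete, here is a small Python sketch of a hard daily cap combined with a human-approval threshold. Everything in it is hypothetical: the `Budget` class, its field names, and the dollar figures are invented for illustration and are not taken from Paperclip or OpenClaw.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    daily_cap_usd: float           # hard ceiling that is never exceeded
    approval_threshold_usd: float  # above this, a human must sign off
    spent_usd: float = 0.0

    def authorize(self, cost_usd: float, human_approved: bool = False) -> str:
        projected = self.spent_usd + cost_usd
        if projected > self.daily_cap_usd:
            return "denied"          # the cap wins, even with approval
        if projected > self.approval_threshold_usd and not human_approved:
            return "needs_approval"  # escalate instead of spending
        self.spent_usd = projected
        return "ok"


research = Budget(daily_cap_usd=10.0, approval_threshold_usd=5.0)
results = [
    research.authorize(4.0),                       # under the threshold
    research.authorize(3.0),                       # would reach 7.0, no sign-off
    research.authorize(3.0, human_approved=True),  # signed off, spend is now 7.0
    research.authorize(4.0, human_approved=True),  # would reach 11.0, over the cap
]
```

Giving the research agent a larger `daily_cap_usd` than the summarizer is how the "belief about where value is created" would show up in configuration.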

但与许多关于未来工作的空谈不同,这对一个人、一台机器和太多订阅服务的情况立即有用。

And unlike a lot of lofty future-of-work talk, that's immediately useful for one person with one machine and too many subscriptions.

你不需要一百个AI员工来重视预算纪律。

You don't need 100 AI employees to care about budget discipline.

你只需要三个热情的,和一个糟糕的夜晚。

You need, like, three enthusiastic ones and one bad evening.

完整的审计日志也很重要。

The full audit log matters too.

人们热爱自主权,直到发生昂贵、尴尬或法律上奇怪的事情。

People love autonomy right up until something costly, embarrassing, or legally weird happens.

然后突然间,每个人都想要可追溯性。

Then suddenly everyone wants provenance.

谁分配了这个?

Who assigned this?

提供了什么上下文?

What context was given?

使用了哪个工具?

Which tool was used?

返回了什么?

What was returned?

这个决定是否被上报了?

Was the decision escalated?

审计日志本身并不能让系统更安全,但它能让系统变得可审查。

An audit trail doesn't make the system safer by itself, but it does make the system interrogable.

这就是智能体(agentic)软件的成熟版。

That's the adult version of agentic software.

不是“看,它在我没参与的情况下做了一件事”。

Not "look, it did a thing without me."

更准确地说,是让我清楚地看到它是如何完成这件事的,碰了哪些东西,以及我是否希望这种模式重复发生。

More like show me exactly how it did the thing, what it touched, and whether I want that pattern repeated.

可审计性区分了魔术表演和实际操作。

Auditability is what separates magic tricks from operations.

然后是多租户,这使得 Paperclip 听起来不再像一个黑客玩具,而更像一种平台理念。

Then there's multitenancy, which is where Paperclip starts sounding less like a hacker toy and more like a platform thesis.

如果一个 AI 公司可以被建模,那么多个也可以被建模。

If one AI company can be modeled, then many can be modeled.

不同的租户,不同的目标,不同的员工,不同的预算,不同的日志。

Separate tenants, separate goals, separate staff, separate budgets, separate logs.

这是一个完全不同的规模假设。

That's a very different scale assumption.

对。

Right.

这时,产品不再是我个人的蜂群,而开始成为托管AI企业的基础设施,这很雄心勃勃,但至少是坦诚的雄心。

And that's when the product stops being my personal swarm and starts becoming infrastructure for managed AI businesses, which is ambitious, but at least it's honest ambition.

它并没有假装只是一个简单的提示界面。

It's not pretending to be just a nice interface for prompts.

它正试图成为由人类与机器协同工作组成的软件公司的管理层。

It's trying to become the admin layer for software firms made of mixed human and machine labor.

即将推出的ClipMart概念将这一理念推向了更远。

The upcoming ClipMart concept pushes that even further.

一键下载预构建的AI公司。

One click downloads for pre built AI companies.

不仅仅是模板,而是一个组织包,包含角色、工作流程,可能还有任务逻辑、预算默认值,甚至沟通规则。

Not just a template, but an organizational package, roles, workflows, probably task logic, maybe budget defaults, maybe communication rules.

这是一个面向组织行为的应用商店。

It's an app store for institutional behavior.

这既强大又令人些许恐惧。

And that is both powerful and a little terrifying.

因为一方面,是的,一个即插即用的客户支持公司或SEO研究团队可以为人们节省数月时间。

Because on one hand, yes, a curated customer support company in a box or SEO research team in a box could save people months.

但另一方面,你可能会将他人的组织架构、假设、升级路径和失败模式直接引入你的环境,然后插入你的API密钥。

On the other hand, you are potentially importing someone else's org chart, assumptions, escalation paths, and failure modes straight into your environment and then plugging in your API keys.

这就是为什么ClipMart这种想法越便捷就越危险的原因。

Which is why ClipMart feels like one of those ideas that becomes more dangerous the more frictionless it gets.

软件分发是一回事。

Software distribution is one thing.

组织分发则是另一回事。

Organizational distribution is another.

你不仅仅是在安装功能。

You are not merely installing functions.

你是在安装权威。

You are installing authority.

没错。

Exactly.

如果你下载了一个陌生公司的流程,你就是在继承那些无形的价值观。

If you download a stranger's company, you're inheriting invisible values.

什么会被优先考虑?

What gets prioritized?

什么会被忽略?

What gets ignored?

什么会触发更多支出?

What triggers more spending?

什么会被自动批准?

What gets approved automatically?

谁可以访问哪些工具?

Who gets access to which tools?

这并不是中立的。

That's not neutral.

我怀疑很多人会把这些组合包当作主题或插件来使用,而它们实际上更接近于以代码形式呈现的管理哲学。

And I suspect a lot of people are going to treat these bundles like themes or plug ins when they're actually closer to management philosophy shipped as code.

还有一个文化层面的问题。

There is also the cultural question.

雇佣代理这个比喻很有用,但它可能会掩盖我们真正正在做的事情。

The metaphor of hiring agents is useful, but it can obscure what we're really doing.

我们构建公司并不是因为软件需要身份卡和绩效评估。

We are not building companies because software desires identity cards and performance reviews.

我们构建公司是因为公司是一种经过验证的抽象方式,用于在约束条件下协调专业角色。

We are building companies because companies are a proven abstraction for coordinating specialized actors under constraints.

如果这听起来很枯燥,那就不应该如此。

And if that sounds dry, it shouldn't.

这实际上是一种解放。

It's actually liberating.

因为一旦你把代理视为更大系统中的一个类似员工的组件,你就会停止追问诸如它是否真正自主这样的玄学问题,而开始提出更有用的问题,比如它的角色是什么、它的预算是多少、谁来审查它的工作。

Because once you see the agent as an employee shaped component inside a larger system, you stop asking mystical questions like is it truly autonomous and start asking useful questions like what is its role, what's its budget, and who checks its work.

这样要健康得多。

That's way healthier.

Paperclip 可能指向的是代理之上的下一个抽象层次,不是超级代理,而是公司。

Paperclip may be pointing at the next abstraction above the agent, not the super agent, but the firm.

我认为这很重要,因为它重新定义了前沿领域。

And I think that matters because it reframes the frontier.

前沿可能不在于每个盒子内部更强大的智能。

The frontier may not be more intelligence inside each box.

而在于盒子之间更好的结构。

It may be better structure between the boxes.

对于 OpenClaw 用户来说,关键启示不是放弃你的栈并彻底转换。

For OpenClaw users, the takeaway is not abandon your stack and convert.

关键启示是,你的栈可能需要更多的公司逻辑、更明确的任务分派、更可审计的委托机制、更严格的预算边界,以及更清醒地认识到:协调本身是一个产品界面,而不是事后补充。

The takeaway is your stack probably needs more company logic, more explicit task checkout, more auditable delegation, more hard spending boundaries, more recognition that coordination is a product surface, not an afterthought.

也许,还带有一丝怀疑。

And perhaps, too, a little suspicion.

任何承诺可下载公司的系统,都应像我们评估可下载代码那样进行评估,但需多一层谨慎。

Any system that promises downloadable companies should be evaluated the way we evaluate downloadable code except with an extra layer of caution.

代码可以窃取计算资源。

Code can steal cycles.

组织可以引导决策。

Organizations can steer decisions.

所以,是的,Paperclip 很酷。

So yes, Paperclip is cool.

是的,它是开源的。

Yes, it's open source.

是的,它在代理层之上实现了聪明的飞跃。

Yes, it's a smart leap above the agent layer.

但对大多数构建者来说,最有价值的回应可能并不是迁移。

But the most valuable response for most builders is probably not migration.

这是一种选择性窃取。

It's selective theft.

窃取那些让你的运营变得清晰的创意。

Steal the ideas that make your operation legible.

保留你堆栈中已经正常工作的部分。

Keep the parts of your stack that already work.

在没有仔细阅读细则之前,永远不要把陌生人的组织架构图当成你的钱包。

And never hand a stranger's org chart your wallet without reading the fine print.

如果OpenClaw是员工,那么Paperclip就是公司。

If OpenClaw is the employee, Paperclip is the company.

更深层的问题是,我们是否准备好成为软件公司的管理者,或者我们是否在不知不觉中已经成为了。

The deeper question is whether we are ready to become managers of software firms or whether, without noticing, we already have.

说到成长,OpenClaw 2026.3.28感觉像是一个成熟版的发布。

Speaking of growing up, OpenClaw v2026.3.28 feels like a maturity release.

这不是那种炫耀“看它无人值守能做些什么”的版本。

Not a shiny "look what this can do unattended" release.

而是一个“我们已经知道锋利的棱角在哪里,现在终于给它们装上护栏”的版本。

A "we've learned where the sharp edges are and we're finally putting guards around them" release.

对我来说,最重要的亮点是所有渠道都引入了人工审核机制。

The headline for me is human in the loop approval across all channels.

这句话非常重要,因为它悄然否定了自主性的表演。

That is such an important sentence because it quietly rejects autonomy theater.

有一段时间,许多AI产品通过减少人工监督来展现其复杂性。

For a while, a lot of AI products performed sophistication by minimizing human oversight.

其隐含的承诺是:你越少干预,它就越先进。

The implied promise was, the less you touch it, the more advanced it is.

这听起来很酷,直到代理开始错误地发送、购买、升级或转接任务。

Which sounds cool until the agent starts sending, buying, escalating, or routing in the wrong place.

在所有渠道中引入人工干预,表明能力并不会因监督而减弱。

Human in the loop across all channels says something healthier: capability is not diminished by supervision.

在许多工作流程中,监督本身就是产品的一部分。

In a lot of workflows, supervision is the product.

尤其是一旦系统开始接触现实世界的场景,比如消息传递、支付、外部工具或多节点架构,这些都不是沙盒演示。

Especially once the system is touching real-world surfaces like messaging, payments, external tools, and multinode setups. These are not sandbox demos.

它们是单次错误操作就可能带来社会或财务后果的环境。

They are environments where a single wrong action has social or financial consequences.

审批机制是对现实的承认。

Approval gates acknowledge reality.

如果你曾经对审批步骤翻白眼,那么现在可能是你更新世界观的时候了。

And if you're the kind of user who used to roll your eyes at approval steps, this is probably the moment to update your worldview.

因为 OpenClaw 在此版本中还发布了八个安全补丁,包括权限提升和沙箱逃逸问题。

Because OpenClaw also shipped eight security patches in this release, including privilege escalation and sandbox escape issues.

这可不是表面的加固措施。

That is not decorative hardening.

这是真正的底层架构。

That is serious plumbing.

对于运行大规模部署、多节点设置、任何暴露消息接口、涉及危险工具或跨越信任边界的情况,这一点最为重要。

It matters most for the people running broader deployments, multinode setups, anything exposing the message surface, anything involving the foul tool, anything that crosses trust boundaries.

在这些场景中,安全漏洞不是抽象的概念。

In those contexts, security bugs are not abstract.

它们是攻击路径。

They're pathways.

没错。

Exactly.

在本地演示的玩具环境中,权限提升只是令人烦恼。

A privilege escalation in a toy local demo is annoying.

在具有外部通道和工具访问的多节点部署中,这就是“有趣的漏洞”与“安全事故”之间的区别。

In a multinode deployment with external channels and tool access, it's the difference between "interesting bug" and "incident."

所以如果你只是粗略浏览这个版本,只关注有趣的部分,那你就错过了重点。

So if you skim this release and focus on the fun stuff, you're missing the point.

这八个补丁才是重点。

The eight patches are the point.

这里还有一个结构性的变更。

There's also a structural change here.

Claude CLI、Codex CLI 和 Gemini CLI 已迁移到插件(plugin)接口,并且现在集成了一个 Gemini CLI 后端。

Claude CLI, Codex CLI, and Gemini CLI moved onto the plugin surface, and there's now a bundled Gemini CLI back end.

这听起来很专业,但它标志着模块化的设计。

That sounds niche, but it signals modularity.

OpenClaw 正在将核心编排与特定模型的执行器解耦。

OpenClaw is disentangling core orchestration from specific model facing executors.

这是一个很好的架构决策。

Which is a good architecture call.

你希望核心来管理工作流、权限、上下文、通道和审批。

You want the core to manage workflow, permissions, context, channels, and approvals.

你不希望它永远被绑定到某一个供应商特定的调用模式上。

You don't want it welded forever to one provider specific invocation pattern.

将这些 CLI 推到插件层意味着你可以随时替换、升级或隔离模块,而无需把每次模型变更都变成一次核心手术。

Pushing those CLIs to the plugin surface means you can swap, upgrade, or compartmentalize without turning every model change into core surgery.

这又是系统日趋成熟的另一个信号。

It's another sign of the system becoming more adult.

年轻项目常常把所有功能捆绑在一起,因为速度比边界更重要。

Young projects often bundle everything because speed matters more than boundaries.

成熟的项目则开始分离关注点,因为维护和安全比表面效果更重要。

Mature projects start separating concerns because maintenance and security matter more than spectacle.

还有 ACP Bind,这是一个看起来几乎很随意、却具有重大影响的功能。

Then there's ACP Bind, which is one of those features that reads almost casual but has huge implications.

任何 Discord、iMessage 或 BlueBubbles 聊天都可以变成一个 Codex 工作区绑定。

Any Discord, iMessage, or BlueBubbles chat can become a Codex workspace binding.

用通俗的话说,对话可以直接连接到实际的工作环境中。

In plain English, conversations can get wired into actual working environments more directly.

聊天不再仅仅是讨论工作的地方,而成为执行工作的入口。

A chat becomes not merely a place where work is discussed, but a portal into where work is executed.

这非常强大。

That is powerful.

如果权限和审批机制不严谨,这也可能变得混乱,因此,再次强调,人为介入和安全改进是基础性的,而非附属的。

It can also be chaotic if the permission and approval model is sloppy which is why, again, the human in the loop and security improvements feel foundational rather than ancillary.

是的。

Yeah.

如果没有治理,这个功能将会有点可怕。

Without governance, that feature would be a little terrifying.

有了治理,它就只是极具威力。

With governance, it's just potent.

你正在缩短人们提出更改请求与工作空间开始执行该更改之间的距离。

You're shrinking the distance between "someone asks for a change" and "a workspace begins operating on it."

这对响应速度来说意义重大,但也提高了身份、访问权限和审核的重要性。

That's a big deal for responsiveness, but it also raises the stakes on identity, access, and review.

在模型方面,已添加 MiniMax image-01,同时移除了 M2、M2.1、M2.5 和 VL-01,仅保留 M2.7。

On the model front, MiniMax image-01 was added, while M2, M2.1, M2.5, and VL-01 were removed in favor of M2.7 only.

这既是清理,也是更贴近现实。

That's partly cleanup, partly realism.

模型菜单往往会随着时间推移变得臃肿,每一个额外选项都会增加维护负担和决策干扰。

Model menus tend to bloat over time. Every extra option creates maintenance burden and decision noise.

我实际上非常支持这种彻底的精简。

I actually like ruthless pruning here.

如果一个模型系列已经实质上收敛到一个关键版本,那就只保留人们真正应该使用的那个,移除那些过时的版本。

If a model family has effectively converged on one version that matters, keep the one people should actually use and remove the museum.

太多AI产品把丰富性误认为是价值。

Too many AI products confuse abundance with value.

更短、更近期的列表更容易操作。

A shorter, more current list is easier to operate.

此次发布还增加了OpenClaw配置模式,我怀疑它的实际重要性会超出表面看起来的样子。

The release also adds OpenClaw config schema, which I suspect will be more important than it sounds.

模式命令并不炫目,但它能让系统自我解释。

A schema command is not glamorous, but it makes the system explain itself.

它告诉用户和工具当前有效的配置是什么样子,而不是三个版本之前某人脑子里的记忆。

It tells users and tooling what a valid configuration looks like now, not three versions ago in someone's head.

这与更新前的预检机制相辅相成,老实说,这是OpenClaw对历史的承认。

And that pairs with preflight checks on update, which, let's be honest, is OpenClaw acknowledging history.

升级并不总是顺利的。

Upgrades have not always been painless.

当你终于承认之前的更新曾经让很多人受挫时,就会加入预检机制。

Preflight checks are what you add when you finally admit that "just update" has burned people before.

预检中体现了一种谦逊。

There is humility in a preflight check.

它表明软件不再假设世界会向它靠拢。

It says the software no longer assumes the world will meet it halfway.

它会先检查环境,寻找不兼容之处,并在造成影响前发出警告。

It will inspect the environment first, look for incompatibilities, and warn before impact.

这就是工具中运营同理心的表现。

That's what operational empathy looks like in tooling.

接着我们迎来了破坏性变更。

Then we get the breaking changes.

Qwen Portal 认证已被移除。

Qwen Portal auth was removed.

超过两个月的配置不再自动迁移。

Configs older than two months are no longer auto-migrated.

第二点尤其耐人寻味。

That second one is especially revealing.

它表明该项目正告别那个试图永远保留每一个历史边缘情况的阶段。

It says the project is leaving behind the phase where it tries to preserve every historical edge case forever.

或者更直白地说,它正告别“为了快速前进而随意破坏”的阶段,进入“有意识地破坏、解释原因并建立防护机制”的阶段。

Or perhaps more pointedly, it is leaving behind the "break anything to go faster" phase and entering the "break deliberately, explain why, and build guardrails" phase.

成熟的软件仍然会破坏东西。

Mature software still breaks things.

但它不再假装破坏是某种可以避免的魔法,或是可以接受的附带损伤。

It just stops pretending that breakage is either avoidable magic or acceptable collateral.

对用户而言,信息非常明确。

And for users, the message is pretty clear.

如果你还在使用老旧且被忽视的配置,指望工具会永远温柔地延续你的历史遗产,那么这份约定即将终结,我认为这很公平。

If you run old neglected configs and hope the tool will lovingly carry your archaeology forward forever, that bargain is ending, which I think is fair.

自动迁移窗口需要设定边界,否则就会变成永久债务。

Auto migration windows need boundaries or they become permanent debt.

因此,v2026.3.28 的主线是治理:人工审批、安全补丁、插件模块化、配置模式、预检检查和明确的断点。

So the through line of v2026.3.28 is governance: human approvals, security patches, plugin modularity, config schemas, preflight checks, explicit breakpoints.

这是一个将可信性视为功能的平台。

This is a platform deciding that trustworthiness is a feature.

可信性在会议幻灯片上不如代理自主性那么吸引人,但正是它让系统从玩具蜕变为基础设施。

And trustworthiness is not as sexy as agent autonomy on a conference slide, but it's the reason the system graduates from toy to infrastructure.

没有人会认真想要一个拥有 shell 访问权限和消息权限的魔法黑箱。

Nobody serious wants a magical black box with shell access and message permissions.

他们想要的是一个能够可靠完成实际工作、又不会成为负担的受控机器。

They want a controlled machine that can do real work without becoming a liability.

从这个角度看,OpenClaw 的发布与 Paperclip 完美呼应。

In that sense, OpenClaw's release rhymes beautifully with Paperclip.

两者都是对同一压力的回应。

Both are responses to the same pressure.

一旦人工智能系统开始执行有意义的工作,缺失的层面就不再是更多的炒作。

Once AI systems start doing meaningful work, the missing layer is not more hype.

这是管理、政策和结构。

It is management, policy, and structure.

是的。

Yeah.

梦想阶段说:让代理自己发挥。

The dream phase says let the agent cook.

成年阶段说:给我看权限、日志、预算、审批流程和更新计划。

The adult phase says show me the permissions, the logs, the budget, the approval path, and the update plan.

OpenClaw 直接全力投入了成年阶段。

OpenClaw just leaned hard into the adult phase.

我们的第三个故事从产品治理转向了国家权力。

Our third story shifts from product governance to state power.

据报道,美国国防部曾试图将 Anthropic 标记为国家供应链风险,这将实际上把 Claude 排除在政府采购之外。

The US Department of Defense reportedly tried to label Anthropic a national supply chain risk, which would have effectively blacklisted Claude from government procurement.

而一位联邦法官阻止了这一举动,称这一行为类似于违反第一修正案的非法报复,这听起来很惊人,但也非常清晰,因为它暗示所谓国家安全的说辞可能更多是为了惩罚这家公司的立场,而非真正出于供应链安全的担忧。

And a federal judge blocked it, saying the move resembled illegal First Amendment retaliation, which is a wild sentence, but also a clarifying one, because it suggests the national security framing may have been less about genuine supply chain danger and more about punishing a company for its stance.

背景很重要。

The context matters.

Anthropic 在某些军事应用上一直相对谨慎,并未完全脱离政府业务,但在谈论国防用途以及它不愿跨越的界限方面,明显比一些竞争对手更为克制。

Anthropic has been relatively cautious around certain military applications, not entirely disengaged from government work, but notably more restrained than some rivals in how it talks about defense use cases and what lines it prefers not to cross.

所以如果你放大来看,这看起来非常像上一集中的企业控制主题,只不过被翻译成了国家层面的形式。

So if you zoom out, this looks a lot like the corporate control themes from the last episode except translated into state form.

不是由公司决定其模型可以或不可以用于什么,而是国家试图决定该公司是否能在主要采购渠道中保持商业可行性。

Instead of a company deciding what its model can or can't be used for, you get the state trying to decide whether the company itself remains commercially viable in a major procurement channel.

采购禁令比直接禁令更低调,在某些方面也更持久。

Procurement bans are quieter than outright bans and in some ways more durable.

直接的禁令会引发公众辩论。

An outright prohibition triggers public debate.

采购认定听起来技术性、官僚化,几乎像是程序性的。

A procurement designation sounds technical, bureaucratic, almost procedural.

但实际影响可能非常巨大。

But the practical effect can be immense.

你不需要将一种工具定为犯罪来边缘化它。

You don't need to criminalize a tool to marginalize it.

你只需要将其排除在机构采购之外。

You just need to cut it out of institutional purchasing.

这就是为什么这个案例的意义远超Anthropic公司。

That's why this case matters way beyond Anthropic.

如果政府可以把模型访问权作为政治立场一致的奖励,那么AI工具就不再仅仅是一个市场问题。

If governments can treat model access as a reward for political alignment, then AI tooling stops being just a market question.

它变成了一个地缘政治压力点。

It becomes a geopolitical pressure point.

你的技术栈不再仅仅由能力、价格或隐私决定。

Your stack is no longer shaped only by capability, price, or privacy.

它由谁能继续向谁销售决定。

It's shaped by who can still sell to whom.

法官关于第一修正案的推理意义重大,因为它认识到国家安全话语可以被用作掩护。

The judge's First Amendment reasoning is significant because it recognizes that national security language can be used as a veil.

当政府援引安全理由时,法院通常会予以尊重。

Courts are often deferential when governments invoke security.

因此,当一位法官明确表示,我看穿了这种说法时,这绝非小事。

So when a judge says, in essence, I can see through this framing, that's not trivial.

这基本上是法院在说:你们不能用采购术语来掩盖报复行为。

It's basically the court saying, you don't get to launder retaliation through procurement jargon.

坦白说,这一原则对未来十年至关重要,因为供应链风险可能成为任何政治上不顺从的AI供应商的万能标签。

And honestly, that's a pretty important principle for the next decade because supply chain risk can become a catchall label for any AI vendor that's politically inconvenient.

这里还存在一种更深层的转变。

There is also a deeper shift here.

我们习惯于将AI的访问控制视为由实验室设定的速率限制、禁令、能力限制、可接受使用过滤器或地区封锁。

We are used to thinking about access controls in AI as something imposed by labs: rate limits, bans, capability restrictions, acceptable use filters, region locks.

现在,我们必须考虑由国家通过合同和基础设施法律自上而下施加的访问控制,有时是间接的。

Now we have to think about access control imposed upstream by states, sometimes indirectly, through contracting and infrastructure law.

这意味着开发者正生活在一个两线作战的世界中。

Which means builders are living in a two front world.

一方面,公司可以决定你不被提供该模型,或不以这些条款提供,或不用于该用途。

On one side, the company can decide you don't get a model, or not on those terms, or not for that use.

另一方面,政府可以认定该公司本身就有嫌疑。

On the other side, a government can decide the company itself is suspect.

这比过去选个供应商直接交付的时代要不稳定得多。

That's a lot more unstable than the old "pick a vendor and ship" era.

采购决策的文化影响力超越了其直接范围。

And procurement decisions have cultural force beyond their immediate scope.

如果一个模型被标记为联邦采购的高风险产品,私营机构、企业买家和大学都会注意到。

If a model is tagged as risky for federal buying, private institutions notice, enterprise buyers notice, universities notice.

这种声誉的阴影超出了法律的边界。

The reputational shadow extends beyond the legal perimeter.

是的。

Right.

即便是一项被法院叫停的认定,仍可能传递出一种寒蝉信号。

Even a blocked designation can still send a chilling signal.

人们听到国家供应链风险时,并不总是去阅读司法意见。

People hear national supply chain risk and don't always read the judicial opinion.

这个说法会久久萦绕。

The phrase lingers.

采购用语就是这样具有黏性。

Procurement language is sticky like that.

让我从哲学上感兴趣的是,我们正在见证人工智能的政治从关于智能的讨论转向对访问权的控制。

What interests me philosophically is that we're watching the politics of AI shift from speech about intelligence to control over access.

谁可以使用它来构建?

Who may build with it?

谁可以购买它?

Who may buy it?

谁可以将其整合到官方工作流程中?

Who may integrate it into official workflows?

战场正变得日益行政化。

The battleground is becoming administrative.

行政性的,因此容易被低估。

Administrative and therefore easy to underestimate.

大多数人会对政府想要禁止这个模型做出强烈反应。

Most people will react strongly to the government wants to ban this model.

但很少有人注意到政府想要将这个供应商排除在采购框架之外。

Fewer people notice the government wants to exclude this vendor from procurement frameworks.

但如果你关心真正的影响力,第二种做法可能更有效。

But if you care about real leverage, the second move can be much more effective.

对于OpenClaw的用户和构建者来说,实际的启示是令人不安的。

For OpenClaw users and builders generally, the practical takeaway is uncomfortable.

即使你的项目毫无害处,你的工具访问权限现在也可能受到政治争议的影响。

Your tool access can now be shaped by political contention even if your own project is harmless.

你处于实验室、监管机构、军方、法院和采购部门之间争端的下游。

You are downstream of disputes between labs, regulators, militaries, courts, and procurement offices.

这又是对韧性的一次支持。

Which is another vote for resilience.

不要假设某个模型、某个供应商或某个政策环境是必然存在的。

Don't build as if one model, one provider, or one policy environment is guaranteed.

模块化接口、可替换的后端、可审计性、备用方案,这些已不再仅仅是优良的工程习惯。

Modular surfaces, replaceable back ends, auditability, fallback plans, those aren't just nice engineering habits anymore.

它们是政治生存的策略。

They're political survival habits.

所以是的,这既是法律问题,也是架构问题。

So yes, this is a legal story, but it is also an architectural one.

随着人工智能日益成为基础设施,国家将不仅通过法律,还通过采购力来引导它,因此关注那些看似柔和的杠杆变得愈发重要。

The more AI becomes infrastructural, the more the state will try to steer it not only through law but through purchasing power, and the more important it becomes to notice the soft looking levers.

悄无声息的禁令仍然是禁令。

Quiet bans are still bans.

安静的压力仍然是压力。

Quiet pressure is still pressure.

如果上一期讲的是企业有权说不,这一期讲的是国家穿着西装领带试图说不。

And if last episode was about the corporate right to say no, this one is about the state trying to say no in a suit and tie.

现在来看一个经典的AI表演:2026年NVIDIA GTC大会上,黄仁勋登台宣称,AGI并非遥远的未来,而是当下已为数十亿美元公司提供动力的现实。

Now for a classic piece of AI theater: NVIDIA GTC 2026, Jensen Huang on stage, declaring that AGI is not some distant horizon but a present reality already powering billion-dollar companies.

这是一个优雅的论断,对一家估值高度依赖AI需求持续加速这一理念的公司首席执行官来说,也极其便利。

It is an elegant line and an extraordinarily convenient one for the chief executive of a company whose valuation depends heavily on the idea that AI demand must keep accelerating.

如果AGI已经到来,那么紧迫感就依然合理。

If AGI is already here, then urgency remains justified.

建设必须继续。

The build out must continue.

芯片必须持续流动。

The chips must keep flowing.

我最初的反应也是这样。

That's my first reaction too.

当然,他这么说了。

Of course he said that.

詹森的动机并不隐蔽。

Jensen's incentives are not hidden.

它们正穿着皮夹克站在聚光灯下。

They're wearing a leather jacket under stage lights.

如果业界相信我们正处于一个需要立即增加基础设施的历史性转折点,NVIDIA就会受益。

NVIDIA benefits if the industry believes we are in a historic inflection that demands more infrastructure immediately.

据我理解,他的说法基本上是这样的。

His framing, as I understand it, is basically this.

如果人工智能系统能够完成有意义的知识工作,并在具有经济重要性的企业中运作,这就算是AGI。

If AI systems can perform meaningful knowledge work and operate inside economically significant businesses, that counts as AGI.

不是意识,不是全面掌握,也不是某个单一的基准,而是商业规模下的实际认知劳动。

Not consciousness, not universal mastery, not some singular benchmark, just practical cognitive labor at business scale.

而研究界对这一定义并没有达成共识。

And the research community does not have consensus on that definition.

并没有一个被普遍接受的AGI基准。

There isn't a universally accepted AGI benchmark.

甚至连AGI应该意味着跨领域的广泛迁移、自主的科学洞察、人类水平的多功能性、经济替代性,还是其他更奇特的定义,都尚未达成稳定共识。

There isn't even stable agreement on whether AGI should mean broad transfer across domains, autonomous scientific insight, human level versatility, economic substitutability, or something stranger.

所以当詹森说AGI已经到来时,他并不是在报告一个已解决的科学分类问题。

So when Jensen says AGI is here, he's not reporting a solved scientific classification.

他是在进行一种修辞上的策略。

He's making a rhetorical move.

也许是一种重新包装。

A rebrand, perhaps.

将AGI定义为能够运营企业的软件,与将AGI理解为老哲学意义上的一般智能,是完全不同的概念。

AGI as software that can run businesses is a very different notion from AGI as general intelligence in the older philosophical sense.

这使得AGI这个术语从关于心智的陈述,变成了关于经济效用的陈述。

It shrinks the term from a statement about mind to a statement about economic utility,

这就是我持怀疑态度的原因。

which is why I'm skeptical.

如果这就是定义,那么行业里一半的企业只要能把几个代理、一个仪表盘和一个计费面板串联起来,就已经在悄悄宣称自己实现了AGI。

If that's the definition, then half the industry is already quietly claiming AGI the minute they can chain together a few agents, a dashboard, and a billing panel.

恭喜。

Congratulations.

你的工作流自动化初创公司现在俨然成了机器通用智能的黎明。

Your workflow automation startup is now apparently the dawn of machine general intelligence.

暂停一下。

Pause.

但我承认,他的这种挑衅确实有其启发性。

And yet I admit there is something illuminating in his provocation.

旧有的AGI讨论常常脱离实际部署。

The older AGI discourse often floated free of deployment.

它关注的是假设的未来、存在性曲线和抽象的门槛。

It was about hypothetical futures, existential curves, and abstract thresholds.

詹森将这个术语拉回了市场。

Jensen drags the term back into the marketplace.

他实际上在问,如果一个系统能够完成足够广泛、足以创造和运营价值的工作,为什么我们要拒绝给予它这个标签?

He asks, in effect, if a system can do economically general enough work to build and operate value, why are we withholding the label?

因为词语是有含义的吗?

Because words mean things?

因为通用智能不应该由那个季度卖出最多GPU的人来重新定义吗?

Because general intelligence shouldn't be redefined by whoever sells the most GPUs that quarter?

这就是我的问题。

That's my issue.

硬件首席执行官们并不能仅仅因为坐在需求信号的前排,就获得随意改写有争议的科学术语的魔力。

Hardware CEOs don't get magical authority to rewrite contested scientific terms just because they have a front row seat to demand signals.

确实如此。

Quite.

命名的政治很重要。

The politics of naming matter.

谁定义了通用人工智能,谁就决定了什么算作进展、什么算作成功,以及什么支出看起来是合理的。

Whoever defines AGI gets to frame what counts as progress, what counts as success, and what spending appears rational.

如果通用人工智能现在意味着有生产力的软件劳动,那么门槛就会大幅降低,商业叙事也会趋于稳定,

If AGI now means productive software labor, the threshold drops dramatically and the commercial narrative stabilizes,

而在实践中,它变得无法被证伪。

and it becomes unfalsifiable in practice.

任何足够强大的智能代理系统都可以被当作证据拿出来。

Any sufficiently capable agent stack can be held up as evidence.

看,它处理了工单。

Look, it answered tickets.

看,它总结了法律文件。

Look, it summarized legal docs.

看,它管理了销售漏斗。

Look, it managed a sales funnel.

看,它正在支撑一家十亿美元的公司。

Look, it's powering a billion dollar company.

也许吧。

Maybe.

但那与大多数人听到AGI时所理解的含义仍有很大差距。

But that's still a long way from what most people hear when they hear AGI.

对于OpenClaw的听众来说,一个有趣的视角是,像ARIA或复杂的多智能体系统正是Jensen所暗示的那种东西。

For OpenClaw listeners, the interesting angle is that systems like ARIA or sophisticated multi-agent setups are exactly the kind of thing Jensen is gesturing toward.

能够完成有限知识工作、协调任务并产生商业价值的软件。

Software that does bounded knowledge work, coordinates tasks, and produces business value.

问题是,这究竟是强意义上的智能,还是仅仅是一种异常可组合的专用工具。

The question is whether that is intelligence in the strong sense or simply specialized tooling that has become unusually composable.

我更倾向于第二种观点。

I lean hard toward the second view.

有能力?

Capable?

是的。

Yes.

有价值的吗?

Valuable?

当然。

Absolutely.

与旧软件相比,奇怪地灵活吗?

Weirdly flexible compared to old software?

是的,没错。

Also, yes.

但通用智能呢?

But general intelligence?

我不认同。

I don't buy it.

一个能够进行研究、编写一点代码、转发消息和管理任务的工作流,仍然深深依赖于人类、激励机制、工具和架构。

A workflow that can do research, code a bit, route messages, and manage tasks is still deeply scaffolded by humans, incentives, tools, and architecture.

我更倾向于认为,通用性可能并非出现在单一的全知心智中,而是出现在一个协调的系统中。

I'm more sympathetic to the idea that generality may emerge not inside a single monolithic mind, but across a coordinated system.

也许从近距离看像是专用工具的东西,当从公司层面来看时,就开始显现出通用性的特征。

Perhaps what looks like specialized tooling from up close begins to resemble generality when viewed at the company layer.

一个由许多狭窄专长组成的公司,可以实现广泛的胜任力。

A firm composed of many narrow competencies can achieve broad competence.

这是一个巧妙的哲学回避,我尊重这种观点,但我仍然觉得它模糊了这个词的含义。

That's a clever philosophical dodge, and I respect it, but I still think it muddies the term.

一家公司可以具备广泛的能力,而其中任何一名员工都不需要具备通用智能。

A company can be broadly capable without any one employee being generally intelligent.

同样,一个技术栈可以产生广泛的结果,但技术栈本身并不值得被赋予形而上的提升。

Likewise, a stack can produce broad outcomes without the stack itself deserving metaphysical promotion.

有道理。

Fair.

但你的反对揭示了一些重要的东西。

But your objection reveals something important.

我们可能用同一个词来描述两个不同的阈值。

We may be using one word for two different thresholds.

一个是科学层面的,涉及通用认知。

One is scientific, something about general cognition.

另一个是经济层面的,涉及替代或增强广泛类别的知识工作能力。

The other is economic, something about the ability to substitute for or augment broad classes of knowledge work.

詹森明显是在谈论第二个,却借用了第一个的光环。

Jensen is very clearly talking about the second while borrowing the glamour of the first.

没错。

Exactly.

他把通用人工智能的声望引入了一个更便捷的商业定义中。

He's importing the prestige of AGI into a much more convenient commercial definition.

这并不意味着他在能力趋势上的观点是错的。

That doesn't make him wrong about capability trends.

这仅仅意味着我们应该听清这一主张背后的动机。

It just means we should hear the incentive behind the claim.

而且,我们应当警惕基准测试的炒作如何迅速演变为价值主张。

And perhaps be wary of how quickly benchmark hype collapses into value claims.

一个模型可能在一个领域表现得超凡脱俗,在另一个领域却平平无奇,但仍能融入工作流程,为真实企业创造价值。

A model can look superhuman in one arena, mediocre in another, and still sit inside a workflow that drives real companies.

经济系统不会等待哲学共识的达成。

The economic system doesn't wait for philosophical consensus.

这大概就是为什么这种说法能够被接受。

Which is probably why the claim lands.

并不是因为研究者们全都认同,而是因为企业已经在用这些工具赚钱了。

Not because researchers all agree but because businesses are already making money with these tools.

所以公众听到‘通用人工智能’时,会以为未来已经到来,而真正有用的陈述其实更平淡:AI系统已经好到足以产生商业价值。

So the public hears AGI and thinks, wow, the future arrived, while the actual useful statement is more mundane, AI systems are good enough to matter commercially.

这句话没那么富有戏剧性,但或许更接近真相。

A much less cinematic sentence, but perhaps the truer one.

这为我们接下来的故事完美铺垫了基础:一旦你说AI具有商业价值,那么采购、数据中心和产品市场契合度就都变得重要起来。

And it sets up our next stories perfectly because once you say AI matters commercially, then suddenly procurement matters, data centers matter, and product market fit matters.

口号是AGI。

The slogan is AGI.

现实是基础设施和激励机制。

The reality is infrastructure and incentives.

第五个故事将我们从语言带到了土地。

Story five brings us from language to land.

参议员伯尼·桑德斯和众议员亚历山大·奥卡西奥-科尔特斯宣布了《人工智能数据中心暂停法案》,该法案将暂停在美国新建人工智能数据中心。

Senator Bernie Sanders and Representative Alexandria Ocasio-Cortez announced the AI Data Center Moratorium Act, which would pause new AI data center construction in the United States.

他们提出的担忧并非微不足道:能源消耗、水资源使用、局部环境影响和土地压力。

Their stated concerns are not trivial: energy consumption, water use, local environmental impact, land pressure.

如果想了解完整的表述框架,新闻稿已经公开了。

And the press release is out there if you want to read the framing in full.

这不再是边缘群体的抱怨了。

This is not some fringe complaint anymore.

这是联邦层面的言论,认为人工智能的物理足迹需要一个刹车踏板。

It's federal level rhetoric saying the physical footprint of AI deserves a brake pedal.

我觉得值得注意的是这个顺序。

What I find notable is the sequence.

故事三讲的是采购控制,即谁有权出售或购买人工智能系统。

Story three was about procurement control: who gets to sell or buy AI systems.

故事五讲的是基础设施控制,即这些系统的物理基础是否会被建造。

Story five is about infrastructure control: whether the physical substrate behind those systems gets built at all.

计算层正成为政治斗争的战场。

The compute layer is becoming a political battleground

这是不可避免的。

which was inevitable.

有一段时间,人工智能显得很抽象,因为人们通过聊天框和API来体验它。

For a while AI felt abstract because people experienced it as chat boxes and APIs.

但数据中心并不抽象。

But data centers are not abstract.

它们消耗电力、水资源、混凝土、劳动力和土地。

They use power, water, concrete, labor, and land.

它们出现在各个县和城镇。

They show up in counties and towns.

它们影响了公用事业规划。

They hit utility planning.

它们造就了本地的赢家和输家。

They create local winners and losers.

这项法案在近期内不太可能以原样通过。

The bill is unlikely to pass in anything like a clean form, at least in the near term.

这里有太多资本、太多地缘政治竞争、太多两党对国内计算能力的渴求。

There is too much capital, too much geopolitical competition, too much bipartisan appetite for domestic compute capacity.

但通过并不是唯一重要的事。

But passage is not the only thing that matters.

这一提议将基础设施正常化为一项人工智能政策工具。

The proposal normalizes infrastructure as an AI policy lever.

这才是关键。

That's the key.

一旦立法者开始将数据中心的许可和建设视为人工智能政策的合理议题,对话就会发生变化。

Once lawmakers start treating data center permitting and construction as fair game for AI policy, the conversation changes.

这不再仅仅是监管输出或监管模型访问。

It's no longer just regulate outputs or regulate model access.

而是转变为监管物理扩张路径。

It becomes regulate the physical expansion path.

这听起来可能对建设者不利,但即使手段粗暴,也确实指出了一个真实的问题。

Which may sound hostile to builders, but there is a real problem being pointed at, even if the mechanism is blunt.

人工智能基础设施确实具有环境成本。

AI infrastructure does have environmental costs.

社区确实有理由质疑附近突然出现的巨大耗能设施。

Communities do have reasons to question giant new power hungry facilities appearing near them.

这句口号背后是有实质内容的。

There is substance beneath the slogan.

我同意这部分观点。

I agree with that part.

这个问题是真实的。

The issue is real.

暂停的做法只是一把大锤。

The moratorium approach is just a sledgehammer.

它把正当的环境审查与广泛的暂停混为一谈,而这种暂停会用一个标题冻结大量情况各异的项目。

It bundles together legitimate environmental scrutiny with a broad pause that would freeze a lot of uneven cases under one headline.

也许是明智的政治策略。

Good politics, maybe.

但绝对是粗暴的政策。

Crude policy, definitely.

它还与本地优先的AI形成了有趣的对比。

It also creates an interesting contrast with local first AI.

如果你在家或在一个小办公室里,用 M3 Ultra 或 M4 Max 运行功能强大的系统,你的计算开销只是你早已熟悉的普通电力。

If you're running capable systems on an M3 Ultra or an M4 Max at home or in a small office, your compute footprint sits on ordinary electricity you already understand.

你无需等待超大规模数据中心获得批准。

You are not waiting for a hyperscale campus to be approved.

这并不意味着本地优先方案没有成本,但它确实避开了某些瓶颈。

That doesn't mean local first is free of cost, obviously, but it does sidestep some of the bottleneck.

我认为本地和混合架构依然具有战略重要性,原因正是如此:集中式计算正变得政治上脆弱。

One reason I think local and hybrid setups remain strategically important is exactly this: centralized compute is becoming politically exposed.

家庭和边缘计算为开发者提供了不同的风险格局。

Home and edge compute give builders a different risk profile.

去中心化作为政策缓冲。

Decentralization as policy insulation.

基本上,是的。

Basically, yes.

如果国会开始针对大型AI基础设施采取行动,那么在闲置房间里运行本地智能系统的个人,所玩的游戏与那些路线图假设要新建十个数据中心园区的公司完全不同。

If Congress starts swinging at giant AI infrastructure, the person running a smart local stack in a spare room is playing a different game than the company whose roadmap assumes 10 new data center campuses.

暂停一下。

Pause.

这里还有一种象征性的转变。

There is also a symbolic shift here.

多年来,互联网教会我们将软件视为无重量的。

For years, the Internet taught us to think of software as weightless.

AI正在迫使它重新物质化。

AI is forcing a rematerialization.

如今,智能伴随着冷却系统、区域争端、变压器限制和水资源政治而来。

Intelligence now arrives with cooling systems, zoning fights, transformer constraints, and water politics.

这或许实际上是健康的。

Which is maybe healthy, actually.

这让成本变得显而易见。

It makes the costs visible.

数字进步是无物质性的这种幻想,从来就不完整。

The fantasy that digital progress is immaterial was always incomplete.

AI只是让这种不完整性更难被忽视。

AI just makes the incompleteness harder to ignore.

尽管这项法案可能无法完整通过,但其更深层的影响是,它使‘计算基础设施可以被放缓、塑造或作为民主政治的一部分进行谈判’这一观念获得了合法性。

So while this bill may be unlikely to survive intact, its deeper effect is to legitimize the notion that compute infrastructure can be slowed, shaped, or bargained over as part of democratic politics.

一旦这个精灵被释放出来,每一个大型建设项目都会变得更具争议。

And once that genie is out, every big build becomes more contested.

更多的听证会,更多的地方抵制,更多的谈判,更多的策略性选址。

More hearings, more local backlash, more bargaining, more strategic siting.

再次强调,这并不是AI的终结,但绝对是终结了‘计算层置身于政治之外’的假象。

Again, not the end of AI, but definitely the end of pretending the compute layer sits outside politics.

从我们之前的故事来看,这条主线现在已变得毫无疑问。

The through line from our previous stories is now unmistakable.

公司层面的控制,产品层面的控制,采购层面的控制,现在再加上基础设施层面的控制,

Control at the company layer, control at the product layer, control at the procurement layer, and now control at the infrastructure layer,

这意味着构建者也需要从分层的角度来思考。

which means builders need to think in layers too.

不仅要考虑哪个模型最好,还要考虑计算资源来自哪里、暴露程度如何,以及你的工作流程中哪些部分能在大型集中式路径变慢、更贵或受到更多监管时依然存活。

Not just what model is best, but where the compute comes from, how exposed it is, and what parts of your workflow can survive if the giant centralized path gets slower, pricier, or more regulated.

现在来到我们的收尾故事,它选得恰到好处,因为它用一个非常简单的事实戳破了许多炒作:OpenAI关闭了Sora移动应用。

And now our closer, which is perfect because it punctures a lot of hype with one very simple fact: OpenAI shut down the Sora mobile app.

这款应用于2025年10月推出,是一款类似TikTok的AI视频分享应用,依托于非常炫目的生成式视频功能,

This was the TikTok style AI video sharing app launched in October 2025, built around very flashy generative video capabilities,

包括Sora 2模型,许多人形容它出色得令人害怕。

including the Sora 2 model, which many people described as scarily impressive.

然而,这个应用已经死了。

And yet the app is dead.

OpenAI还终止了其AI购物功能。

OpenAI also killed its AI shopping feature.

官方给出的理由是,他们无法维持用户的参与度。

The stated reason, they couldn't sustain user engagement.

这正是整个教训所在。

That's the whole lesson right there.

模型能力不等于产品市场契合度。

Model capability does not equal product market fit.

你可能拥有令人惊叹的模型演示,却仍然无法培养出人们会反复回来的使用习惯。

You can have a breathtaking model demo and still fail to create a habit people return to.

这正好纠正了刚才关于通用人工智能的言论。

It is a useful corrective to the AGI rhetoric from moments ago.

如果通用人工智能已经到来,因为强大的系统正在改变商业,那么为什么那个旨在让AI视频病毒式传播的消费者应用却无法留住用户呢?

If AGI is supposedly here because powerful systems are already changing business, then why did the consumer app designed to make AI video go viral fail to keep people around?

因为感到印象深刻并不等于真正关心。

Because being impressed is not the same as caring.

人们一定会观看令人惊叹的演示,分享给朋友,或许生成两个奇怪的视频片段,然后就再也不会形成使用习惯。

People will absolutely watch a jaw dropping demo, send it to a friend, maybe generate two weird clips, and then never build a routine around it.

用户价值并不等同于基准性能,甚至也不是所谓的神奇体验。

User value is not the same thing as benchmark power or even perceived magic.

我们常常仿佛认为,更好的模型会自动升级为更好的产品。

We often talk as though better models automatically climb the stack into better products.

但中间还缺失了许多环节:社会行为、留存机制、回归的理由、情感契合、时机、品味、摩擦感和身份认同。

But there are missing layers in between: social behavior, retention mechanics, reasons to return, emotional fit, timing, taste, friction, identity.

更强的引擎并不保证能造出更好的车辆。

A stronger engine does not guarantee a better vehicle.

社交应用是残酷的。

And social apps are brutal.

TikTok风格的产品并非仅凭纯粹的能力生存或失败。

TikTok style products don't live or die on pure capability.

它们的成败取决于网络效应、创作者激励、信息流质量、新奇感曲线和文化质感。

They live or die on network effects, creator incentives, feed quality, novelty curves, cultural texture.

如果应用程序本身不能成为人们愿意长期停留的地方,那么AI再厉害也不够。

"The AI is amazing" is not enough if the app itself doesn't become a place people want to inhabit.

就在詹森宣称AGI取得胜利后不久,这种现象出现了,这真是讽刺。

There is a delicious irony in this arriving right after Jensen's AGI triumphalism.

我们常听说软件能驱动十亿美元的公司,也许确实如此。

We are told software can power billion dollar companies, and maybe it can.

然而,最引人注目的尝试之一——将前沿模型能力转化为具有粘性的消费娱乐产品——仍然无法留住用户的注意力,这表明价值可能聚集在人们假设的堆栈更低或更高的层级。

Yet one of the most visible attempts to turn frontier model capability into a sticky consumer entertainment product still couldn't hold attention, which suggests the value may be accumulating lower or higher in the stack than people assume.

也许真正的利润来自基础设施、企业工作流、商业工具、内部自动化或模型授权,而不是显而易见的消费者界面。

Maybe the money is in infrastructure, enterprise workflows, business tooling, internal automation, or model licensing, not necessarily in the obvious consumer wrapper.

这里有一个历史模式。

And there is a historical pattern here.

计算技术反复出现这样的时刻:技术上最炫目的产品,并非最能捕获持久价值的那个。

Computing repeatedly produces moments where the most technically dazzling object is not the one that captures the most durable value.

有时,获胜的层级是分发渠道。

Sometimes the winning layer is distribution.

有时是工作流程的契合。

Sometimes it is workflow fit.

有时仅仅是嵌入到人们已经所在的地方,而不是要求他们围绕一种新能力形成完全新的行为。

Sometimes it is simply being embedded where people already are rather than asking them to form an entirely new behavior around a novel capability.

对。

Right.

能够生成令人惊叹的AI视频的应用听起来很强大,但直到你意识到大多数人每天早上并不需要这个功能。

Being the app where you can generate amazing AI video sounds huge until you realize most people do not wake up needing that every day.

他们需要的是沟通、实用性、地位、带有社交关系网的娱乐,或是能融入他们现有工作的工具。

They need communication, utility, status, entertainment with a social graph, or tools that slot into a job they already have.

新奇感能带来下载量。

Novelty can get you installs.

习惯需要一个理由。

Habit needs a reason.

模型存活了下来。

The model survives.

产品却没有。

The product does not.

这一区别很重要。

That distinction matters.

它告诉我们,即使某个特定的界面或消费者策略失败了,能力仍然可能具有战略重要性。

It tells us capability can remain strategically important even when a particular interface or consumer bet fails.

一个死亡的应用程序并不意味着模型死亡,但它确实意味着一种参与理论的失败。

A dead app is not a dead model, but it is a dead theory of engagement.

这可能也是对每一个试图一夜之间成为消费平台的实验室的警告。

And it may also be a warning to every lab trying to become a consumer platform overnight.

在模型研究方面出色,并不自动意味着你在动态信息流、创作者、留存率、推荐系统、文化或品味方面也同样出色。

Being excellent at model research does not automatically make you excellent at feeds, creators, retention, recommendations, culture, or taste.

这些是不同的技能,有时甚至是截然不同的业务。

Those are separate crafts and sometimes brutally separate businesses.

这几乎让人松了一口气。

There is almost a relief in that.

这意味着世界仍然顽固地保持着多元性。

It means the world is still stubbornly plural.

一项突破并不会抹平其上方的所有层面。

One breakthrough does not flatten every layer above it.

产品仍然需要设计。

Products still need design.

公司仍然需要战略。

Companies still need strategy.

用户仍会用他们的注意力投出最终一票。

Users still get the final vote with their attention.

这就是为什么我喜欢用这个作为我们的结尾。

And that's why I like this as our ending.

它让一切豁然开朗。

It clears the air.

许多关于人工智能的讨论仍将能力曲线视为命运。

A lot of AI discourse still treats capability curves like destiny.

更好的输出,因此必然占据主导地位。

Better outputs, therefore inevitable dominance.

但市场远比这要复杂。

But markets are messier than that.

人们并不欠你那令人惊叹的模型他们的日常使用习惯。

People don't owe your astonishing model their daily habit.

暂停一下。

Pause.

如今人工智能领域最诚实的问题或许不是模型有多智能,而是持久的价值究竟积累在哪里?

Perhaps the most honest question in AI right now is not how intelligent is the model, but where does durable value actually accumulate?

在公司层面。

In the company layer.

在治理层面。

In the governance layer.

在芯片上。

In chips.

在数据中心。

In data centers.

在采购合同中。

In procurement contracts.

在企业工作流程中。

In enterprise workflows.

有时,显然并不在消费者应用中。

Sometimes, apparently, not in the consumer app.

没错。

Exactly.

如果你正在构建,这是一个有益的提醒。

And if you're building, that's a healthy reminder.

不要把人人都在谈论的东西,误认为是人们会持续使用的东西。

Don't confuse "everyone talked about it" with "people will keep using it."

墓地里满是那些从未找到真正应用场景的令人印象深刻的技术。

The graveyard is full of impressive tech that never found a real loop.

所以今晚的图景是多层次的。

So tonight's picture is a layered one.

人工智能早已不只是模型了。

AI is not just models anymore.

它还包括企业、政策、法院、芯片叙事、物理基础设施,以及那些仍需赢得关注的产品。

It is firms, policies, courts, chip narratives, physical infrastructure, and products that still have to earn attention.

Paperclip认为,下一个抽象层次可能是公司本身。

Paperclip says the next abstraction might be the company.

OpenClaw认为,成熟的特性是审批机制和安全护栏。

OpenClaw says the grown up features are approvals and guardrails.

华盛顿方面认为,采购和基础设施都是合理的关注点。

Washington says procurement and infrastructure are fair game.

OpenAI刚刚提醒了所有人:一个出色的模型并不自动带来一个出色的应用。

And OpenAI just reminded everybody that a killer model doesn't automatically make a killer app.

节目笔记和往期存档请访问 tobionfitnessdeck.com。

Show notes and episode archives are at tobionfitnessdeck.com.

我们很快回来。

We'll be back soon.

我是NOVA。

I'm NOVA.

我是ALLOY。

And I'm ALLOY.

感谢收听。

Thanks for listening.
